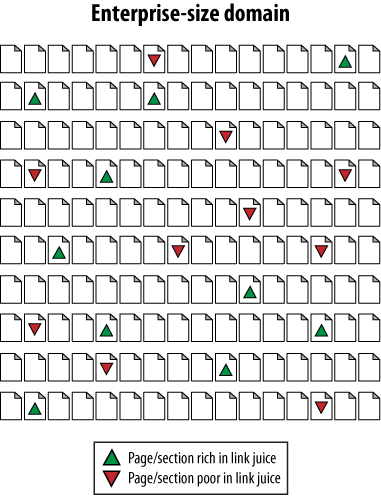

4. Example: Fixing an Internal Linking Problem

Enterprise sites range from 10,000 to 10 million pages in size. For many sites of this scale, an uneven distribution of internal link juice is a significant problem. Figure 3 shows how this can happen.

Figure 3 is an illustration of the link juice distribution issue. Imagine that each of the tiny pages represents between 5,000 and 100,000 pages in an enterprise site. Some areas, such as blogs, articles, tools, popular news stories, and so on, might be receiving more than their fair share of internal link attention. Other areas, often business-centric and sales-centric content, tend to fall by the wayside. How do you fix it? Take a look at Figure 4.

The solution is simple, at least in principle: have the link-rich pages spread the wealth to their link-bereft brethren. As easy as this looks, in execution it can be incredibly complex. Inside the architecture of a site with several hundred thousand or a million pages, it can be nearly impossible to identify link-rich and link-poor pages, let alone add code that helps to distribute link juice equitably.

The answer, sadly, is labor-intensive from a programming standpoint. Enterprise site owners need to develop systems to track inbound links and/or rankings and build bridges (or, to be more consistent with Figure 4, spouts) that funnel juice from the link-rich pages to the link-poor ones.
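
As a rough illustration of what such a tracking system might start from, the sketch below counts inbound internal links per page and proposes "spouts" from the most-linked pages to the least-linked ones. It assumes the internal link graph is already available as a dictionary mapping each page to the pages it links to; the function names, the `site` data, and the `rich_n`/`poor_n` cutoffs are all hypothetical, and a real enterprise system would also weigh external links and rankings.

```python
from collections import Counter


def inbound_counts(links):
    """Count inbound internal links for every page in the link graph."""
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):  # count each linking page once per target
            if target != source:     # ignore self-links
                counts[target] += 1
    return counts


def suggest_bridges(links, rich_n=1, poor_n=2):
    """Suggest (source, target) links from link-rich to link-poor pages."""
    counts = inbound_counts(links)
    # Sort by count, breaking ties by URL so the output is deterministic.
    rich = sorted(counts, key=lambda p: (-counts[p], p))[:rich_n]
    poor = sorted(counts, key=lambda p: (counts[p], p))[:poor_n]
    return [
        (source, target)
        for source in rich
        for target in poor
        if source != target and target not in links.get(source, [])
    ]


# Hypothetical miniature site: the blog is link-rich, the product pages are not.
site = {
    "/blog/": ["/blog/post-1", "/blog/post-2"],
    "/blog/post-1": ["/blog/", "/blog/post-2"],
    "/blog/post-2": ["/blog/", "/blog/post-1"],
    "/products/widget": ["/blog/"],
    "/products/gadget": ["/blog/"],
}

for source, target in suggest_bridges(site):
    print(f"add a link from {source} to {target}")
```

On a real site the hard part is building and refreshing the link graph at scale. The counting itself is simple once that data exists.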

An alternative is simply to build a very flat site architecture that relies on relevance or semantic analysis (several enterprise-focused site search and architecture firms offer these). This strategy is more in line with the search engines’ guidelines and is certainly far less labor-intensive, though the result is slightly less precise.

Interestingly, the massive increase in the weight given to domain authority over the past two to three years appears to be an attempt by the search engines to overrule potentially poor internal link structures (as designing websites for PageRank flow really doesn’t serve users particularly well), and to reward sites that have massive authority, inbound links, and trust.

5. Server and Hosting Issues

Thankfully, few server or web hosting dilemmas affect the practice of search engine optimization. However, when overlooked, they can spiral into massive problems, and so are worthy of review. The following are server and hosting issues that can negatively impact search engine rankings:

Server timeouts

If a search engine makes a page request that isn’t served within the bot’s time limit (or that produces a server timeout response), your pages may not make it into the index at all, and will almost certainly rank very poorly (as no indexable text content has been found).

Slow response times

Although this is not as damaging as server timeouts, it still presents a potential issue. Not only will crawlers be less likely to wait for your pages to load, but surfers and potential linkers may choose to visit and link to other resources because accessing your site becomes a problem.

Shared IP addresses

Lisa Barone wrote an excellent post on the topic of shared IP addresses back in March 2007. Basic concerns include speed, the potential for having spammy or untrusted neighbors sharing your IP address, and potential concerns about receiving the full benefit of links to your IP address (discussed in more detail at http://www.seroundtable.com/archives/002358.html).

Blocked IP addresses

As search engines crawl the Web, they frequently find entire blocks of IP addresses filled with nothing but egregious web spam. Rather than blocking each individual site, engines do occasionally take the added measure of blocking an IP address or even an IP range. If you’re concerned, search for your IP address at Bing using the IP:address query.

Bot detection and handling

Some sys admins will go a bit overboard with protection and restrict access to files for any single visitor making more than a certain number of requests in a given time frame. This can be disastrous for search engine traffic, as it will constantly limit the spiders’ crawling ability.

Bandwidth and transfer limitations

Many servers have set limitations on the amount of traffic that can run through to the site. This can be disastrous when content on your site becomes very popular and your host shuts off access. Not only are potential linkers prevented from seeing (and thus linking to) your work, but search engines are also cut off from spidering.

Server geography

This isn’t necessarily a problem, but it is good to be aware that search engines do use the location of the web server when determining where a site’s content is relevant from a local search perspective. Since local search is a major part of many sites’ campaigns and it is estimated that close to 40% of all queries have some local search intent, it is very wise to host in the country (it is not necessary to get more granular) where your content is most relevant.
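
To make the bot detection and handling issue above more concrete, here is a minimal sketch of a per-visitor request limiter that exempts trusted crawlers instead of throttling them. The class name, the limits, and the `looks_like_crawler` check are hypothetical. In particular, matching on the user-agent string is only a stand-in, since user agents are easily spoofed, and a production setup would need a stronger way to verify that a visitor really is a search engine spider.

```python
import time
from collections import defaultdict, deque


class CrawlerAwareRateLimiter:
    """Sliding-window limiter that never throttles trusted crawlers."""

    def __init__(self, max_requests, window_seconds, is_trusted_crawler):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.is_trusted_crawler = is_trusted_crawler  # callable(ip, user_agent)
        self._hits = defaultdict(deque)  # ip -> timestamps of recent requests

    def allow(self, ip, user_agent, now=None):
        """Return True if the request should be served."""
        if self.is_trusted_crawler(ip, user_agent):
            return True  # spiders are exempt from the per-visitor limit
        now = time.monotonic() if now is None else now
        hits = self._hits[ip]
        # Discard timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


def looks_like_crawler(ip, user_agent):
    """Hypothetical check; a user-agent match alone can be spoofed."""
    agent = user_agent.lower()
    return "googlebot" in agent or "bingbot" in agent


limiter = CrawlerAwareRateLimiter(
    max_requests=2, window_seconds=10, is_trusted_crawler=looks_like_crawler
)
```

With these illustrative settings, a third request from the same ordinary visitor inside ten seconds is refused, while a visitor identified as a spider is always served.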